|

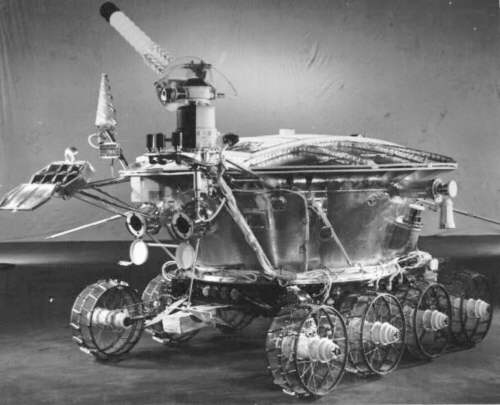

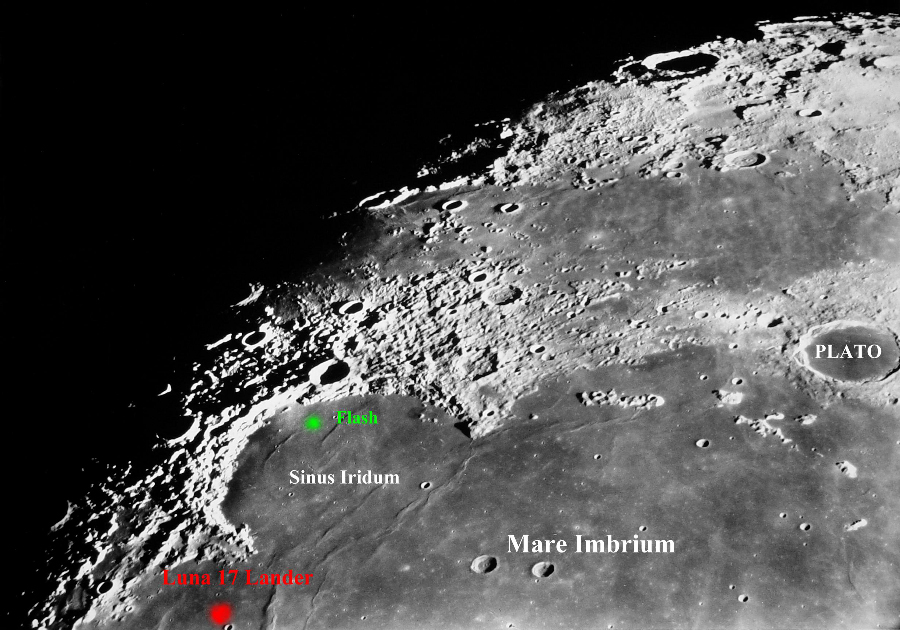

This is interesting for a couple of reasons. Of the 5 Laser Ranging Reflectors on the Moon, 2 from the Russians (Lunokhod 1 & 2) and one each from Apollo 11, 14 and 15, only 4 are working. Lunokhod 1 has been MIA since 1971. But maybe not anymore: the Russian Luna 17 carrying the rover landed just outside Sinus Iridum, where I captured this bright green flash. Well... not just outside, about 200 km away.

|

|||

.. |

|||

.. |

|||

| Lunokhod 1 did return some data and photos for a short time but never returned a reflected laser beam because of calibration issues and, of course, the main problem: they don't know where it is!

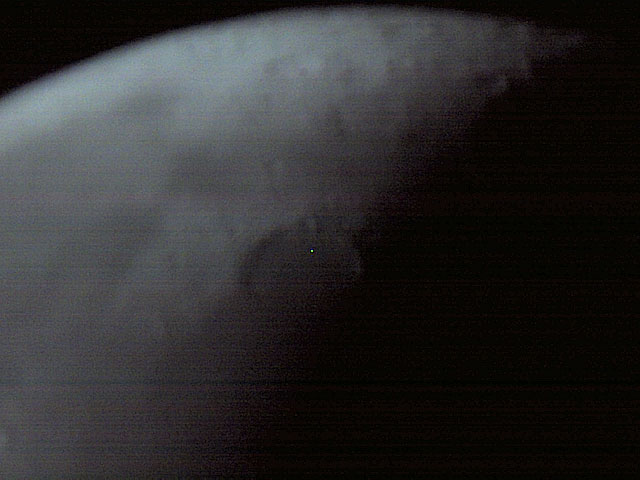

It disappeared after the signals became erratic and fewer. Some theorize it fell into a hole or is stuck, pointing down at a steep angle where it couldn't receive signals. Others, including me, think it lost its ability to receive commands and may have continued in the direction it was going until it lost power, was blocked by something or had a mechanical failure. The entire region is relatively flat, and it's possible it was capable of traveling much farther than previously thought. The distance from the landing site to the flash I captured is approximately 200 km. That seems like quite a distance for the little probe to travel without assistance. I don't have any theories on that yet. But we really don't know what its potential range could have been. If it's located, it'll be the find of the century. The scientific community has been trying to locate it since it was "lost" in 1971... so they say.

Lunokhod 2 is being used by NASA as a fourth Ranging Reflector in conjunction with our three for even more accuracy in determining the Moon's distance and rate of orbit as it continually moves farther away from Earth (3.8 cm per year). Scientists send multiple pulses of laser beams for up to several hours. They couldn't get any data from a single flash except for that instant, if any at all. Another reason they send multiple pulses is that not all of the reflected pulses hit their target back here on Earth. This is because the field of light is much wider on its return... around nine miles wide. I'm not sure if these beams are in visible light wave frequencies but I'll check.

UPDATE: I did more research and found the laser pulses are visible and are observed by scientists through the observatory scopes at the transmitting site during these data collecting sessions. I contacted the current A.P.O.L.L.O. (Apache Point Observatory Lunar Laser-ranging Operation) Project leader, and he told me consumer scopes aren't powerful enough to see them (remember that statement). He also related to me that the scopes that do see the beams need to be close to the target receivers and transmitters. My note: Hence the transmitter is scope-mounted for targeting ease and visual observation. Also the target array reflector panels on the Moon have to be adjusted frequently because of alignment deviation during transmission. (See Apache Point photos below.) He also said the laser beams haven't been directed to the Lunokhod 1 area for decades. Here is his assessment of the #00695 JPG I sent him:

"Your image is fuzzy due to the usual influences, and any point source on the moon would be similarly fuzzy. Nothing real in the frame is as small as a single pixel so this is not an image of something on the moon."

My rebuttal: His first sentence is true for ambient light reflections, not for laser light-wave frequencies. My reply to the second sentence was: The field-of-view scaled image width, at the resolution I used, is about 900 miles. After doing the math, a single pixel renders a scaled image width of 1.42 miles. That's plenty of area for "something real"; we just can't see anything tangible in that small an area in this scope... or yours for that matter... but it rules out "nothing real is as small as a single pixel". And being the skeptic I am, I'm not going to trust an organization's public explanation on everything they tell us. Wait 'til you read the "explanation" another scientist gave me on my other clip titled, "Possible Craft in Lunar Orbit".
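The per-pixel figure in the rebuttal above can be checked with a quick calculation. This is only a rough sketch: it assumes the field of view spans about 900 miles across the frame, as stated, and that the frame is 640 pixels wide (the size given for the cropped 640x480 image on this page, which may not be the resolution actually used for the estimate). The function name `miles_per_pixel` is made up for illustration.

```python
# Rough check of the image scale quoted in the rebuttal.
# Assumptions (not measurements): ~900 miles across the field of view,
# and a frame width of 640 pixels (taken from the 640x480 crop).

def miles_per_pixel(field_width_miles: float, image_width_px: int) -> float:
    """Return the width of lunar surface covered by one pixel, in miles."""
    if image_width_px <= 0:
        raise ValueError("image width must be a positive number of pixels")
    return field_width_miles / image_width_px

scale = miles_per_pixel(900.0, 640)
print(f"Approximate scale: {scale:.2f} miles per pixel")
```

With those inputs the result comes out at roughly 1.4 miles per pixel, in line with the 1.42 mile figure. The small difference is expected, since "about 900 miles" is itself an approximation.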

My theory is... this is a reflected flash from one of the other observatory sessions, which still may be scanning the area at random times and not reporting that fact. And possibly I was at the optimal angle to pick up a single reflection. The other observatories involved in these sessions are McDonald Observatory, Côte d'Azur Observatory in France and a couple more. But the LLR Project may be axed. As of 1999, the operation costs were $500,000 per year at each observatory. But millions have already been spent on all the equipment as well as newer upgrades since 1999, and it is one of the better NASA projects in my opinion.

Imagery can have a wide definition. Look at the Apache Point photos. These look to be taken with a low to medium-end digital camera. The middle photo shows the camera angle is well away from the beam's receiver but the beam is still visible on its return from the Moon, and taken with an ordinary camera seemingly zoomed in. See what I mean about... "explanations" and the above statement on... "consumer scopes aren't powerful enough"? But a cheap digital camera is? So... it looks like you don't have to be that close. I'm trying to get a schedule of these sessions to try it out myself. I'll post the results.

Update: They don't have timetable scheduled sessions. I was told they have the sessions when time allows and get only a few hours' notice. I asked if they would notify me and they said, "No"...!? Well, excuse me! I hope the project does get scrapped now!

With yesterday's and today's technologies, and I believe the military and other agencies are already using tomorrow's, the rover should have been found. The recent SMART-1 mission comes to mind. And where is the archive of that mission's database? The crap they have on their site is context images. The imaging hardware on the Ion Solar Electric Propelled craft was extensive, expensive and could differentiate a basketball from a rock! Or should I say... a rover from a rock. And billions were spent on just the R & D for the propulsion system. The craft carried seven hardware experiments, performing 10 investigations, including three remote sensing instruments. Military grade. So what did they do with it? What else?... crash it into the surface! This has become the fate of every piece of hardware we and others have sent, with the only exceptions being early planned probe landings (although 30% of those slammed into the surface anyway) and manned missions. (See Pegasus Addition #1)

And Clementine, which was rotating at 80 revolutions per minute somewhere on the far side where signals couldn't reach her. And eventually gravity prevailed. That's their "explanation" anyway. They claim a faulty circuit fired one retro rocket used for maintaining altitude and that sent her into a spin until all the fuel was exhausted. That would have sent her towards the Sun, not into a spin. And the onboard imaging hardware should have revealed far more than what's posted. I think one of two things happened: Something else stopped her operation and she met the surface on highly disagreeable terms. Or she's still there and operational but on a different mission. After all, Clementine was no lady. She was equipped with the latest spy imagery available. And she was designed to return. They also claim she finished her mission in mapping the Moon. Well.... where are the hi-res close-ups of the far side? The answer I got was... "Oh, most of those had data loss due to weak signals". Do you buy that? I sure don't.

Granted, SMART-1 was an ESA project but we were, and are, heavily involved. And what... no thought whatsoever about bringing even some of them back to our orbit for retrieval? Or at the very least, have them configured for a soft landing on the Moon's surface for future study when trips there and back are common? |

|||

|

Pegasus Addition #1: New Tactics for Space Scientists: NASA Deliberately Crashing Satellites NASA's 'Flyby Shooting' of Venus and More Satellites Plowed into Planets Air Force Had Plans to Nuke Moon, Plutonium Spacecraft and Project Lucifer |

|||

|

Pegasus Addition #2: Clementine Satellite Image Galleries Clementine Color ASU Images released for first time Wherefore Art Thou Clementine? The Truth About Clementine

The following excerpts are from a DoD News Briefing released on Oct 2006 on Defense Link website. Subject: Discovery of Ice on the Moon

"The Clementine Project, first of all, let me tell you was a joint project. Something very good took place in the government. The Ballistic Missile Defense Organization was in charge of the project. The Naval Research Laboratory designed and built the satellite. Lawrence Livermore Laboratory built the sensor package that was on board. Finally, NASA contributed by financial support of the scientific team that analyzes the data, as well as providing to us the use of the Deep Space Network array of antennas to receive the transmissions back from the Clementine. So this was truly a joint government operation between many arms of the U.S. Federal Government." - Dr. Dwight Duston

"I'd just like to say why the Clementine mission was done by the Ballistic Missile Defense Organization and the relevance that it has. Back in the late 1980s, the Ballistic Missile Defense Organization -- at that time the Strategic Defense Initiative Organization -- built a lot of advanced technologies. These technologies were about an order of magnitude smaller than anything that was available at the time. The reason why these technologies were developed is because we needed to do space defense. There was a great emphasis at that time for space ballistic missile defense, that we needed to build a small satellite to provide space defense. So many cameras, many navigation and guidance systems were built along those lines." - Colonel Rustan

Q: Where is Clementine now?

A: The spacecraft, as you know, from the name Clementine, is only supposed to be here for a short period of time and be lost and gone forever, so it was intended for a very short period of time after this lunar mission, did a rendezvous with the earth, and shortly after that was shifted by the moon's gravity and continued a flight which will bring it back near the earth about nine years from now. So it's an 11 year total flight around the sun. So basically it's moving like a little planet around the sun, and it will bring it back close to us in about nine years... It's two years since it left us so it will be another nine years before it's back. But it's not useful right now. The mission is finished.

Q: But unlike its namesake, it's not lost and gone forever. It will be back?

A: It will be back, but it's not a useful spacecraft any more.

Q: What's the presumptive volume of it then, and how did you discern that?

A: As I mentioned, what we can tell from looking at the radar return is roughly the area that is covered by this. Assuming it reflects ice like ice on Mercury -- making that assumption. That's been well looked at. Then in order to see this back scatter effect, this roadside reflector effect; it's estimated that we have to see some number of wavelengths of our radar into the ice. In reviewing the paper, several of the reviewers posited we probably need to see somewhere between 50 and 100 wavelengths. So our wavelength is about six inches. So at the thickest case, it's roughly 50 feet.

Q: That translates to what in volume?

A: We were very conservative in the press release, but if you take basically 100 square kilometers by roughly 50 feet, you get a volume of something like a quarter of a cubic mile, I think it's on that order. It's a considerable amount, but it's not a huge glacier or anything like that.

Q: Can you compare that with something you know?

A: It's a lake. A small lake.

U.S. Department of Defense
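The back-of-envelope ice estimate in the briefing can be re-done in a few lines. This is only a sketch using the numbers quoted in the excerpt (a radar wavelength of about six inches, up to 100 wavelengths of penetration, and roughly 100 square kilometers of area). The variable names are illustrative, and the unit conversion factors are the standard ones.

```python
# Re-doing the briefing's rough ice-volume arithmetic.
# Inputs are the approximate figures quoted in the excerpt, not measurements.

INCH_M = 0.0254      # meters per inch
FOOT_M = 0.3048      # meters per foot
MILE_M = 1609.344    # meters per statute mile

wavelength_in = 6.0      # radar wavelength, "about six inches"
max_wavelengths = 100    # upper end of the "50 to 100 wavelengths" range
area_km2 = 100.0         # "basically 100 square kilometers"

# Thickest case: 100 wavelengths of six inches each.
thickness_m = wavelength_in * max_wavelengths * INCH_M
thickness_ft = thickness_m / FOOT_M

# Volume = area x thickness, converted to cubic kilometers and cubic miles.
volume_m3 = area_km2 * 1e6 * thickness_m
volume_km3 = volume_m3 / 1e9
volume_cubic_miles = volume_m3 / MILE_M**3

print(f"Thickness: {thickness_ft:.0f} ft ({thickness_m:.2f} m)")
print(f"Volume: {volume_km3:.2f} cubic km, {volume_cubic_miles:.2f} cubic miles")
```

The thickness works out to the 50 feet quoted, and the volume lands at a little over a third of a cubic mile, which is the same order as the "quarter of a cubic mile" given from memory in the briefing.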

|

|||

|

Apache Point Observatory

..

..

APOLLO (Apache Point Observatory Lunar Laser-ranging Operation) |

|||

| It should also be noted that I'm aware of the "hot pixel" anomaly prevalent in CCD sensors. However, I'm using a CMOS sensor equipped camera just for that reason. I personally have not experienced this anomaly in CMOS sensors and it has not occurred in this camera before or since. If it does occur again, I will post that event here as an update to let everyone know.

The hot pixels I've experienced in CCDs stay on and eventually burn out to what's known as a "dead pixel". Also, in my case, the pixels were either blue or red. As I write this, my LCD monitor has one red hot pixel and two dead pixels. If this anomaly is present in CMOS sensors, I have not experienced it as yet, unless this frame is the first. I've looked around the net and have found other sites that discuss and explain this for CCD sensors but not CMOS. I had the camera checked and the sensor was found not to be defective or damaged (except for the scratch), and it had no voltage issues. However, I do state in the video that I have to assume this is a hot pixel. But I don't anymore, so I'm going to remake this. |

|||

|

(Cropped and annotated 640x480)

.. |

|||

Extracted Frame (46 KB JPG)

Note: The movie file is 30 fps and the flash was captured on only one frame, #00695.

Original (900 KB BMP) |

|||

| FAIR USE NOTICE: This page contains copyrighted material the use of which has not been specifically authorized by the copyright owner. Pegasus Research Consortium distributes this material without profit to those who have expressed a prior interest in receiving the included information for research and educational purposes. We believe this constitutes a fair use of any such copyrighted material as provided for in 17 U.S.C § 107. If you wish to use copyrighted material from this site for purposes of your own that go beyond fair use, you must obtain permission from the copyright owner. | |||

|

|